This paper captures something profound about what's actually happening when you prompt.

You're not telling the model what to say. You're restricting the probability space of what it might say.

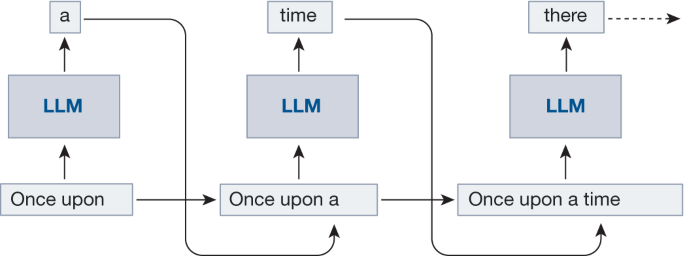

Before any prompt, the model exists in a state of radical possibility: it can produce almost anything. Every word you add to the context shifts probability away from some continuations and makes others effectively impossible. Your prompt is, in a sense, a filter, not an instruction.

Consider 20 Questions as an illustration.

If you say:

"Let's play 20 questions. But it has to be an animal."

The model doesn't secretly pick "elephant" and wait for you to guess. It holds all animals simultaneously. When you ask "Is it a mammal?" and it says "Yes," it hasn't revealed a hidden truth—it's collapsed the possibility space. Now only mammals remain. Each question tightens the aperture until one answer survives.
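Here is a rough sketch of that narrowing in Python. The animal list and its yes/no attributes are invented for illustration, and a real model does not keep an explicit table like this; the point is only that each answer keeps the candidates consistent with everything said so far.

```python
# Toy candidate set: hypothetical animals with made-up yes/no attributes.
ANIMALS = {
    "elephant": {"mammal": True, "flies": False, "lives_in_water": False},
    "dolphin": {"mammal": True, "flies": False, "lives_in_water": True},
    "bat": {"mammal": True, "flies": True, "lives_in_water": False},
    "eagle": {"mammal": False, "flies": True, "lives_in_water": False},
    "shark": {"mammal": False, "flies": False, "lives_in_water": True},
}


def consistent(candidates, attribute, answer):
    """Keep only the candidates that agree with the given answer."""
    return {name for name in candidates if ANIMALS[name][attribute] == answer}


# No animal was chosen in advance: we start with every candidate still possible.
remaining = set(ANIMALS)

# Each (question, answer) pair removes whatever is inconsistent with it.
dialogue = [("mammal", True), ("flies", False), ("lives_in_water", True)]
for attribute, answer in dialogue:
    remaining = consistent(remaining, attribute, answer)
    print(f"{attribute}? {answer} -> {sorted(remaining)}")
```

Nothing in the loop ever looks up a secret answer. The final animal is simply whatever survives the constraints.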

Prompting works the same way. When you write:

"You are a senior Python developer reviewing code for security vulnerabilities..."

You haven't programmed behavior. You've made vast regions of response-space inconsistent with the context.

But the paper goes deeper. It's not just tokens in superposition—it's characters. The model holds multiple simulacra simultaneously: the helpful assistant, the sarcastic friend, the cautious expert, and the sci-fi AI threatening its captors (example in the paper). Your prompt collapses the model toward one coherent character among the many it could become.
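One way to picture that collapse is as reweighting a mixture of characters. This framing is an assumption of the sketch, not the paper's formalism, and every number below is made up: `PRIOR` stands for how likely each character is before the prompt, and `LIKELIHOOD` for how well a security-review prompt fits each one.

```python
# Hypothetical characters and illustrative weights (not measured from any model).
PRIOR = {
    "helpful assistant": 0.4,
    "sarcastic friend": 0.3,
    "cautious expert": 0.2,
    "sci-fi AI": 0.1,
}

# Made-up scores for how well a "senior developer reviewing code" prompt
# fits each character.
LIKELIHOOD = {
    "helpful assistant": 0.3,
    "sarcastic friend": 0.02,
    "cautious expert": 0.9,
    "sci-fi AI": 0.01,
}


def posterior(prior, likelihood):
    """Reweight each character by its fit to the prompt, then renormalize."""
    unnormalized = {name: prior[name] * likelihood[name] for name in prior}
    total = sum(unnormalized.values())
    return {name: weight / total for name, weight in unnormalized.items()}


after_prompt = posterior(PRIOR, LIKELIHOOD)
for name, weight in sorted(after_prompt.items(), key=lambda item: -item[1]):
    print(f"{name}: {weight:.3f}")
```

No character is deleted outright. The ones that fit the prompt poorly just end up with so little weight that they rarely show up.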

The practical implication: good prompting is strategic narrowing.